Aristotle warned that law without moral purpose becomes mere force. Twenty-four centuries later, the same truth applies to algorithms.

The Regulatory Moment

The world is living through the most significant regulatory intervention in the history of artificial intelligence.

On August 2, 2026, the EU AI Act reaches full enforcement for high-risk systems.

Penalties for noncompliance can reach 35 million euros or 7 percent of a company’s global annual turnover, whichever is higher. That exceeds even the GDPR’s bite.

The Act classifies AI by risk. It bans social scoring, workplace emotion detection, and untargeted facial recognition scraping. It mandates transparency, human oversight, and technical documentation for high-risk systems in healthcare, law enforcement, and credit decisions.

These are serious, necessary steps. But they are not sufficient.

Because the hardest problems in AI governance are not technical. They are moral.

The Compliance Trap

Compliance answers a narrow question: Does this system follow the rules?

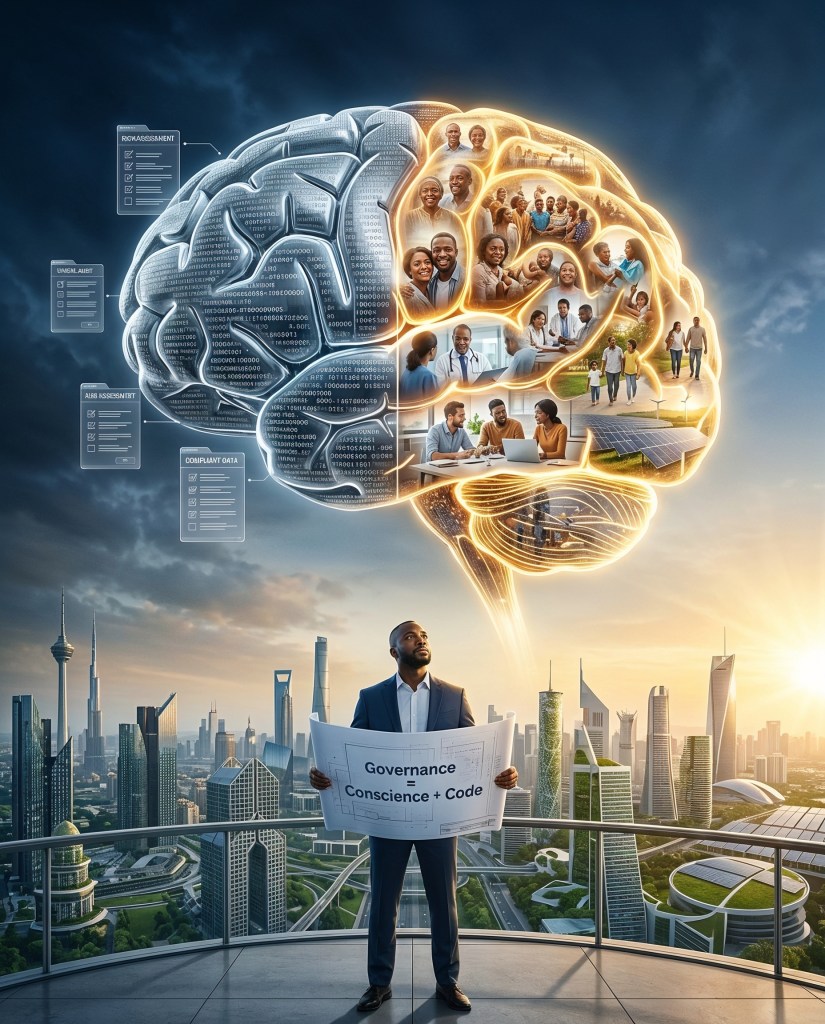

Governance answers a harder one: Does this system serve the people it touches?

One is a checklist. The other is a conscience. And too many builders — and too many regulators — are confusing the two.

An AI credit-scoring model can satisfy every regulatory requirement and still systematically exclude the entrepreneurs it was designed to serve. A medical diagnostic tool can pass every audit and still fail patients who live in settings the designers never visited. A hiring algorithm can be transparent, documented, and technically sound — and still encode the biases of the data it was trained on.

Compliance without moral architecture is a house without a foundation. It looks solid until the ground shifts.

What Building in the Field Taught Me

I did not learn this in a classroom. I learned it in pharmacies.

At RxAll, we built an AI-powered scanner that uses spectroscopy and machine learning to authenticate medicines. This work has helped remove 1.3 million counterfeit medications from supply chains, and it serves millions of patients monthly. We are a Fast Company World Changing Ideas 2025 honoree. Our RxScanner is deployed across thousands of pharmacy locations.

But our first product iteration had a 40 percent rejection rate among the pharmacists who needed it most. The AI was accurate. The system was not just.

Why? We designed it for a lab, not for a low-resource pharmacy with intermittent connectivity, handwritten records, and a deep trust deficit with new technology.

We rebuilt the framework to match the lived reality of the people it served. We considered their workflows, their constraints, and their dignity. As a result, adoption climbed to 92 percent.

That was not a technical fix. That was a moral one.

We did not change the algorithm. We changed the architecture of trust.

The Missing Layer

The EU AI Act is the most ambitious regulatory framework for artificial intelligence the world has seen. I support it. But it has a structural gap — and that gap is moral architecture.

A. Risk classification is necessary but not sufficient. A system categorized as “low risk” can still cause immense harm if it is deployed in a context the regulators did not anticipate. Governance must be contextual, not just categorical.

B. Transparency without values is performative. Disclosing how a model works means nothing if the values embedded in it were never interrogated. You can publish every line of code and still build a system that harms.

C. Enforcement without moral clarity rewards the loudest, not the weakest. A rules-based framework without conscience protects those with the best lawyers, not those with the deepest needs. Sound familiar? The rules-based international order has the same flaw.

D. Builders must lead, not just regulators. Governments write rules. Builders write reality. Every AI system encodes values. The question is whether those values were chosen deliberately, by people who understand the communities those systems will touch, or absorbed passively from training data shaped by the biases of the powerful.

The Builder’s Standard

Here is what I believe. Moral clarity in AI is not a luxury. It is infrastructure.

At RxAll, we built moral architecture into our system from day one. We did not wait for a regulation to tell us that patients deserve authentic medicine.

At StorsApp, we deploy AI-powered credit scoring that uses alternative data to serve the 95 percent of entrepreneurs who are locked out of formal finance. We do this not because a compliance officer asked us to, but because exclusion is a design failure, not a market condition.

At Frontieres Bay Energies, we build solar cold chain solutions. They keep vaccines and food safe in places the grid does not reach. We believe infrastructure without conscience is just hardware.

Every builder who touches AI must ask three questions before a single line of code is written:

Who does this system serve? Who does it exclude? And did we choose those answers deliberately?

If you cannot answer all three, you are not governing. You are guessing.

The Choice

The world is racing to regulate artificial intelligence. The EU leads. The United States is close behind. India, the UK, and dozens of other nations are drafting their own frameworks.

But regulation alone will never be enough. A document without moral backbone is a suggestion, not a shield. The future of AI governance will not be shaped by the regulators who write the rules. It will be shaped by the builders who decide what those rules protect.

Compliance is what you do when someone is watching. Governance is what you build so it works when no one is.

The algorithms are already making decisions. The question is whether those decisions carry conscience — or just code.

Onwards.

Adebayo Alonge is the Founder and Group CEO of RxAll, StorsApp, and Frontieres Bay Energies. A Harvard Kennedy School Mason Fellow, Yale School of Management alumnus, and MIT Legatum Fellow, he builds AI-powered platforms that deliver healthcare, capital, and clean energy to underserved markets worldwide. He has raised $11M+ from Tier 1 VCs, driven $180M+ in product sales, and serves millions of patients monthly. He is a Fast Company World Changing Ideas 2025 honoree and winner of the Hello Tomorrow DeepTech Prize.
